If You Can’t Use an Assessment to Make a Hiring Decision, Why Are You Using It to Make Development Decisions?

Walk into any HR conference or browse LinkedIn for five minutes, and you'll encounter a dizzying array of personality assessments promising better hiring, stronger teams, and more effective leaders. But many of these tools aren’t validated for selection and offer little predictive power for job performance.

The issue isn’t just validity. It’s also fragmentation. Different tools introduce different frameworks, languages, and disconnected data, making it nearly impossible to build a coherent view of talent across the organization.

The Real Cost of Disconnected Assessment Strategies

The consequences of talent decisions extend far beyond hiring. The cost of a bad hire can reach 30% of first-year earnings, but the impact of weak succession decisions, misaligned development investments, and inconsistent leadership standards compounds over time.

At the same time, talent leaders are being asked to do more with less: fewer resources, tighter budgets, and higher expectations for measurable impact.

Under those circumstances, disconnected assessment strategies create hidden costs:

Data that can’t be compared across employees or teams

Development efforts that don’t align with role requirements

Multiple “languages” of performance that confuse leaders about what success looks like

Inefficiency and missed insights

If your hiring data, development insights, and succession decisions don’t connect, you’re not maximizing the value of your assessment investment; you’re limiting it.

The Validity Question: What Separates Useful from Entertaining

Before evaluating specific tools, it’s important to understand what makes a psychometric assessment useful.

The gold standard is criterion-related validity, the statistical relationship between assessment scores and actual job outcomes such as performance, productivity, or training success.

Personality assessments vary significantly in their ability to predict performance. Research shows validity coefficients ranging from approximately 0.12 to 0.41 depending on the instrument, scoring method, and study design [5], [6].

This difference is not trivial. It’s the difference between decisions barely better than chance and decisions that meaningfully improve business outcomes.
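To make the size of that gap concrete, here is a minimal sketch of how a criterion-related validity coefficient is commonly computed (as a Pearson correlation between assessment scores and a job outcome) and what the two ends of the reported range mean in terms of variance explained. The scores and ratings are made-up illustrative data, and the function names are hypothetical, not taken from any cited study.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def variance_explained(r):
    """Share of outcome variance associated with the predictor (r squared)."""
    return r ** 2

# Hypothetical data: one assessment score and one performance rating per employee.
assessment_scores = [42, 55, 61, 48, 70, 66, 53, 59]
performance_ratings = [3.1, 3.4, 3.9, 2.8, 4.2, 3.6, 3.5, 3.3]

r = pearson_r(assessment_scores, performance_ratings)
print(f"validity coefficient for the sample data: r = {r:.2f}")

# The two ends of the range cited in the text.
for coefficient in (0.12, 0.41):
    share = variance_explained(coefficient)
    print(f"r = {coefficient:.2f} -> {share:.1%} of performance variance")
# r = 0.12 -> 1.4% of performance variance
# r = 0.41 -> 16.8% of performance variance
```

Squaring the coefficient is a simplification, but it shows why the ends of the range are not interchangeable: the stronger instrument accounts for roughly twelve times as much of the variation in performance.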

Two factors drive this variation:

Empirical foundation: effective assessments are built on large, diverse datasets and demonstrate reliability, clear factor structures, and consistent validity across multiple job families and performance criteria.

Scoring methodology: tools that derive scoring from actual performance data outperform those based solely on theoretical assumptions.

The implication is straightforward: demand evidence, not testimonials, when evaluating any assessment. Be skeptical of vague claims about “insight,” “awareness,” or “team language.”

Why Frameworks Matter

The issue isn’t simply choosing a “better” assessment. It’s choosing a framework that allows your data to connect. Without a shared foundation, assessment results remain isolated: useful in the moment, but difficult to scale, compare, or apply across the talent lifecycle.

This is where most assessment strategies break down. Organizations introduce a new tool to solve a specific problem, such as hiring, development, or team building, without considering how it aligns with the tools already in place. Over time, they accumulate multiple frameworks that don’t speak the same language.

More tools don’t create better insights. Better alignment does.

The Five-Factor Model: What the Research Consistently Shows

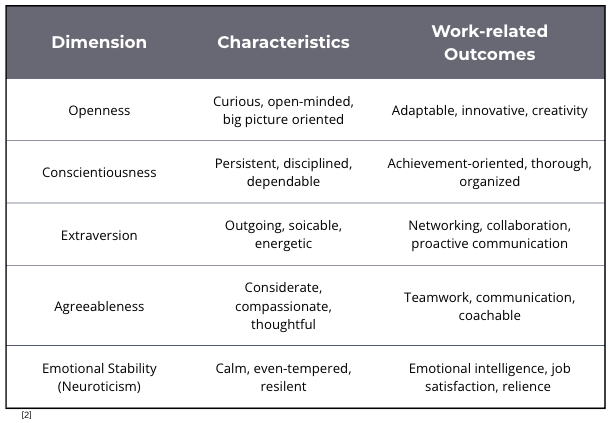

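Among personality frameworks, the Five-Factor Model (FFM), or Big Five, stands apart in both scientific rigor and practical application. It organizes personality into five continuous dimensions: openness to experience, conscientiousness, extraversion, agreeableness, and neuroticism (often reported in reverse as emotional stability).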

Conscientiousness is the most consistent predictor of job performance across industries, roles, and levels [1]. The remaining dimensions show smaller but meaningful relationships depending on role requirements.

The real power of FFM assessment emerges when traits are used in combination. Composite profiles aligned to job demands consistently outperform single-trait interpretations and more accurately reflect how job performance actually works.

But the advantage of the FFM is not just its validity; it’s its structure.

Because traits are measured on continuous scales within a shared framework, results can be compared, aggregated, and applied across hiring, development, succession, and team effectiveness.

This allows organizations to move from isolated insights to talent analytics.
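The composite profiles described above can be sketched as a weighted sum of standardized trait scores. This is one simple way to build a composite, not a prescribed method: the role weights, candidate names, and scores below are hypothetical, and real weights would need to come from validation data for the role in question.

```python
from statistics import mean, pstdev

# Hypothetical role profile: these weights are illustrative, not validated.
ROLE_WEIGHTS = {
    "conscientiousness": 0.5,
    "extraversion": 0.3,
    "emotional_stability": 0.2,
}

def z_scores(values):
    """Standardize raw scores so that traits share a common scale."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # Everyone scored the same, so the trait cannot differentiate.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]

def composite_scores(people, weights):
    """Return a weighted composite per person from raw trait scores."""
    names = list(people)
    totals = {name: 0.0 for name in names}
    for trait, weight in weights.items():
        standardized = z_scores([people[name][trait] for name in names])
        for name, z in zip(names, standardized):
            totals[name] += weight * z
    return totals

# Hypothetical raw scores on a shared 0-100 scale.
candidates = {
    "Avery": {"conscientiousness": 70, "extraversion": 60, "emotional_stability": 65},
    "Blake": {"conscientiousness": 50, "extraversion": 55, "emotional_stability": 50},
    "Casey": {"conscientiousness": 40, "extraversion": 35, "emotional_stability": 45},
}

scores = composite_scores(candidates, ROLE_WEIGHTS)
for name, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {score:+.2f}")
```

Because every trait sits on a continuous scale within the same framework, the same raw scores can be reweighted for a different role or averaged across a team without collecting new data.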

MBTI, DiSC, Enneagram, and Insights: The Evidence Gap

Many commonly used frameworks are popular because they are engaging, easy to understand, and accessible. The challenge is not popularity; it is evidence.

These tools are not designed to predict job performance and do not provide the type of criterion-related validity required for selection decisions.

The MBTI classifies individuals into 16 types but has weak predictive validity for job performance. One study found it explained only 3.5% of the variance in job satisfaction [4].

DiSC and similar quadrant-based tools share the same structural limitations: categorical outputs that limit precision and comparability.

Insights builds on Jungian typology and provides development-focused insights, but does not publish evidence linking results to performance outcomes.

The Enneagram originates outside of modern psychometric science, with research showing mixed reliability and validity [3].

These frameworks tend to oversimplify meaningful concepts in ways that reduce their usefulness for decision-making.

For example, categorical outputs collapse meaningful differences. Someone just above a threshold is treated the same as someone at the extreme. That loss of precision limits both predictive power and practical application.
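A small sketch makes the loss of precision visible. The cutoff of 50, the 0-100 scale, and the labels are assumptions chosen for illustration and do not reproduce any particular instrument's scoring.

```python
def to_category(score, cutoff=50):
    """Collapse a continuous score into a two-way label at a fixed cutoff."""
    return "High" if score >= cutoff else "Low"

# Hypothetical scores on a 0-100 continuous scale.
people = {"Ana": 49, "Ben": 51, "Cy": 99}

for name, score in people.items():
    print(f"{name}: score={score}, category={to_category(score)}")

# Ana and Ben are 2 points apart but receive different labels.
# Ben and Cy are 48 points apart but receive the same label.
gap_across_cutoff = people["Ben"] - people["Ana"]
gap_within_label = people["Cy"] - people["Ben"]
print(f"different labels, gap of {gap_across_cutoff}")
print(f"same label, gap of {gap_within_label}")
```

Once the scores are replaced by labels, the 48-point difference is no longer recoverable, which is why categorical results are hard to compare or aggregate.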

The issue is not whether these tools are popular or engaging. It’s whether the insights can be used to make better decisions.

A More Effective Approach: One Framework, Connected Data

The organizations seeing the greatest return from their talent investments are not using more tools. They are using fewer, better-aligned tools grounded in a common framework.

A more effective approach includes:

Standardize on a validated framework: Use FFM-based assessments as the foundation for your talent initiatives.

Build role-specific composite profiles: Align personality data to job requirements to improve both selection and development outcomes.

Connect data across the talent lifecycle: Ensure assessment results from hiring inform development, succession, and team decisions.

Reduce tool fragmentation: Limit the number of frameworks in use to avoid conflicting data and unnecessary complexity.

This is how organizations can move from isolated assessments to integrated talent intelligence.

Doing More with Less Starts with Better Data

Most organizations don’t need more assessments; they need their existing data to work harder.

In our upcoming webinar, “Doing More with Less: Connecting Your Assessment Data,” we’ll walk through:

Why type-based tools create fragmentation

What the FFM is and why it’s the gold standard

How to audit your current assessment ecosystem

What a connected assessment strategy looks like in practice

Conclusion

The assessment market rewards tools that are engaging, accessible, and easy to use. But talent leaders aren’t measured on engagement; they’re measured on outcomes.

The organizations seeing the greatest impact are not adding more assessments. They are building systems where their data connects, where hiring, development, and succession decisions reinforce one another rather than operating in isolation.

When your data connects, your decisions improve, and the value of every assessment increases.

References

[1] Barrick, M. R., & Mount, M. K. (1991). The Big Five personality dimensions and job performance: A meta-analysis. Personnel Psychology, 44(1), 1–26.

[2] Chamorro-Premuzic, T., & Furnham, A. (2010). The Psychology of Personnel Selection. Cambridge University Press.

[3] Hook, J. N., Hall, T. W., Davis, D. E., Van Tongeren, D. R., & Conner, M. (2021). The Enneagram: A systematic review of the literature and directions for future research. Journal of Clinical Psychology, 77(4), 865–883.

[4] Pittenger, D. J. (2005). Cautionary comments regarding the Myers-Briggs Type Indicator. Consulting Psychology Journal: Practice and Research, 57(3), 210–221.

[5] Salgado, J. F., Anderson, N., & Tauriz, G. (2015). The validity of ipsative and quasi-ipsative forced-choice personality inventories for different occupational groups. International Journal of Selection and Assessment, 23(1), 1–14.

[6] Van Iddekinge, C. H., Roth, P. L., Raymark, P. H., & Odle-Dusseau, H. N. (2012). The criterion-related validity of integrity tests: An updated meta-analysis. Journal of Applied Psychology, 97(3), 499–530.